Use k8saas with a managed database

Introduction

Digital products are usually composed of multiple components:

- The front end that allows a user to interact with the service with a graphical interface.

- The back end that exposes APIs that are consumed by the front end or external components.

A microservices architecture leads to cohesive services, each made of some code and one or more databases. In most cases, and certainly if you are reading this documentation, your application is fully containerized, as in the following diagram:

Pros: This architecture works well for developing quickly and gives a development squad maximum autonomy. Containers make software development and deployment easier through CI/CD.

Cons: To go to demo or production, you need to implement additional security requirements such as strong authentication for the kubeconfig, security monitoring and alerting, secured communication between pods, and certificate generation to expose your application over HTTPS. All these industrialization activities are mandatory for production, but they are time-consuming and deliver no end-user features.

Because you are on the TDP, core services are here to accelerate feature development. With Kubernetes as a Service (K8SaaS), a major part of the security requirements is already implemented and managed by a transversal team. You can adapt your deployment architecture as shown in the following diagram:

Pros: Built-in security mechanisms are provided, such as confidentiality between containers, security operations for the clusters (security alerting), TLS certificate generation, and application monitoring. For the full list of features provided by the service, see the home page.

Cons: The Thales BL developers or partners remain responsible for maintaining the other components, such as the databases and the caching mechanisms. That means managing backups, monitoring capacity, and patching vulnerabilities. These activities are time-consuming and provide no value to end users.

Advice: At the Digital Factory, we recommend using Azure managed services as much as possible, because they come with an SLA and simplify RBAC implementation and security management. For instance, see the Azure Database for MySQL documentation on Microsoft Docs.

Finally, we recommend the following architecture for your digital product:

Content of this Tutorial

Step 1

- Deploy Cosmos DB

- Access your Cosmos DB from the rebound-vm

- [Optional] Try to access the database from your laptop and understand the error

Step 2

- Ask for a private endpoint for k8saas

- Dockerize your application

Architecture

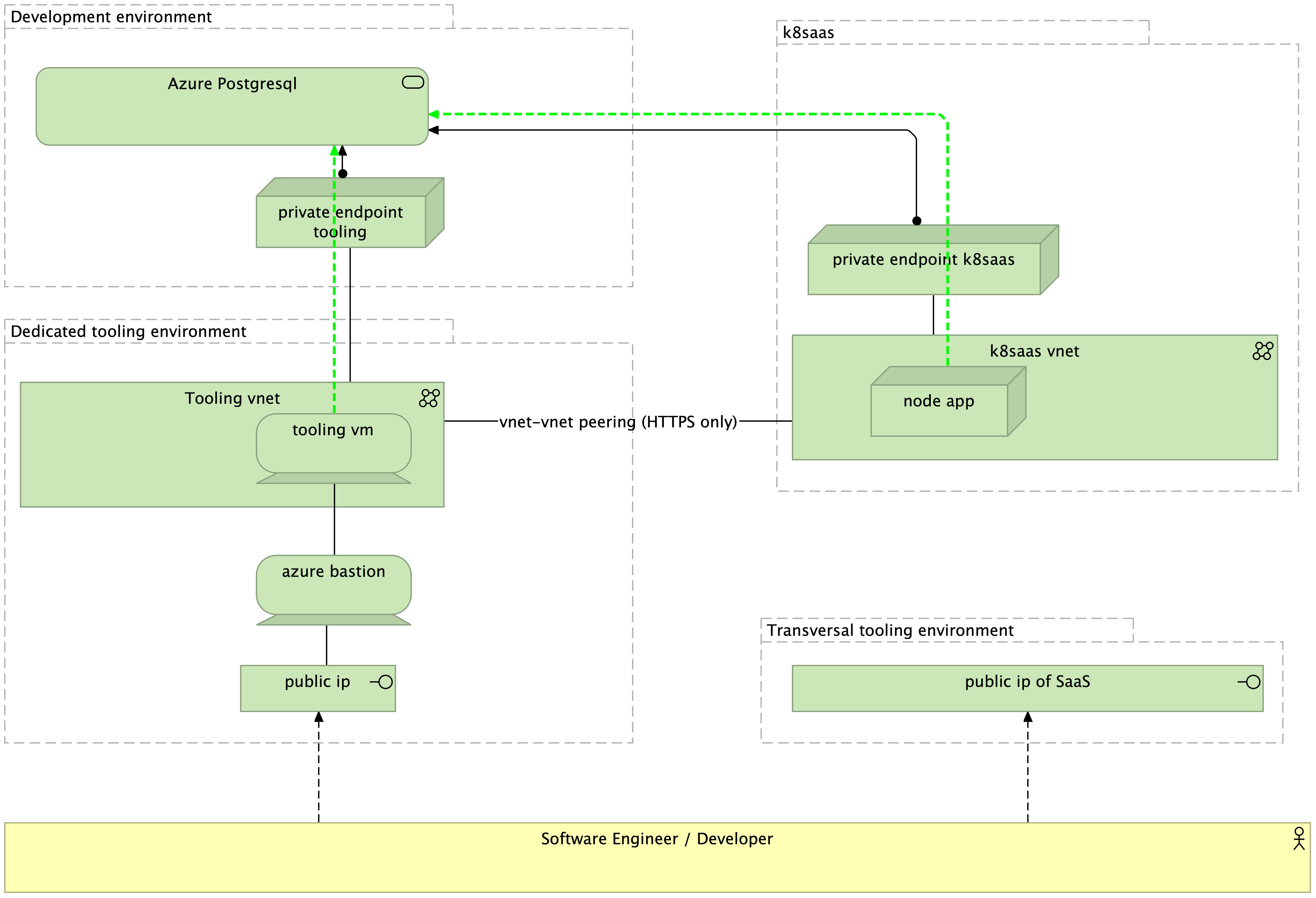

The high-level application architecture of this tutorial is described here:

As you can see, unlike the BYOD use case, direct communication from the software engineer's laptop is forbidden (red arrow). To access the application, use Azure Bastion.

The high-level technology architecture of this tutorial is described here:

Step 1

Prerequisites

You should have:

- an Azure subscription in the TrustNest platform

- a naming convention defined for your cloud resources

MANDATORY:

- DO NOT USE the IP ranges 10.50.0.0/16 and 10.51.0.0/16

Note: If your infrastructure uses one of these IP ranges, peering between your VNet and k8saas is impossible.
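A minimal sketch of how a planned address space could be checked against the two reserved ranges before creating a VNet. The function names (`ipToInt`, `cidrBounds`, `overlapsReserved`) are hypothetical and only the two CIDR blocks come from this tutorial.

```javascript
// Reserved ranges taken from the tutorial text.
const RESERVED_CIDRS = ["10.50.0.0/16", "10.51.0.0/16"];

// Convert a dotted IPv4 address to an unsigned 32-bit integer.
function ipToInt(ip) {
  const parts = ip.split(".").map(Number);
  if (parts.length !== 4 || parts.some((p) => !Number.isInteger(p) || p < 0 || p > 255)) {
    throw new Error(`Invalid IPv4 address: ${ip}`);
  }
  return ((parts[0] << 24) | (parts[1] << 16) | (parts[2] << 8) | parts[3]) >>> 0;
}

// Return the first and last address of a CIDR block as integers.
function cidrBounds(cidr) {
  const [ip, prefixText] = cidr.split("/");
  const prefix = Number(prefixText);
  if (!Number.isInteger(prefix) || prefix < 0 || prefix > 32) {
    throw new Error(`Invalid CIDR block: ${cidr}`);
  }
  const size = 2 ** (32 - prefix);
  const start = Math.floor(ipToInt(ip) / size) * size;
  return { start, end: start + size - 1 };
}

// True when the given block shares at least one address with a reserved range.
function overlapsReserved(cidr) {
  const candidate = cidrBounds(cidr);
  return RESERVED_CIDRS.some((reserved) => {
    const r = cidrBounds(reserved);
    return candidate.start <= r.end && r.start <= candidate.end;
  });
}

console.log(overlapsReserved("10.50.12.0/24")); // true
console.log(overlapsReserved("10.52.0.0/24")); // false
```

The check compares address intervals rather than prefixes, so a wider block such as 10.48.0.0/13, which contains both reserved ranges, is also reported as overlapping.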

Advice:

- Naming convention. We recommend using:

<entity-name>-<project-name>-<what_you_want>-<environment>-<type_of_cloud_resource>
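A minimal sketch of how the convention could be applied consistently in scripts. The helper `resourceName`, its parameter names, and the sample values are hypothetical and chosen only for illustration.

```javascript
// Hypothetical helper: joins the five parts of the recommended convention
// <entity-name>-<project-name>-<what_you_want>-<environment>-<type_of_cloud_resource>.
function resourceName({ entity, project, purpose, environment, type }) {
  const parts = [entity, project, purpose, environment, type];
  for (const part of parts) {
    // Reject missing or empty parts so that no name is built with a gap.
    if (typeof part !== "string" || part.trim() === "") {
      throw new Error("Every part of the naming convention must be a non-empty string");
    }
  }
  // Lower-casing is an assumption of this sketch, not a rule stated in the tutorial.
  return parts.map((part) => part.trim().toLowerCase()).join("-");
}

// Example with made-up values.
console.log(
  resourceName({
    entity: "tds",
    project: "training",
    purpose: "todo",
    environment: "dev",
    type: "cosmosdb",
  })
);
```

Generating names from one function, rather than typing them by hand, is one way to keep the resources of a team recognizable across resource groups.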

We are going to create the following resources:

- A Cosmos DB database

- 2 private endpoints

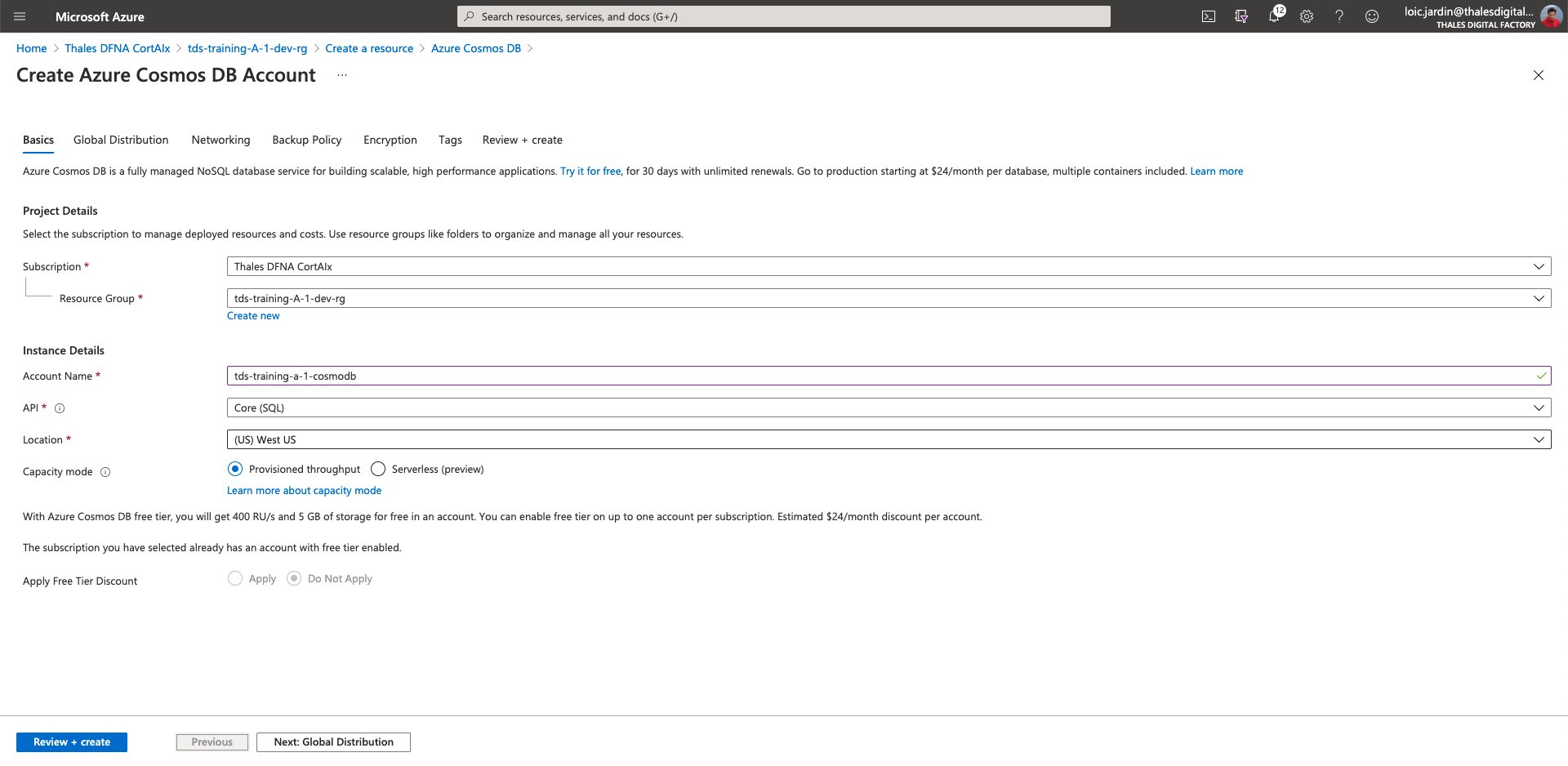

Deploy Cosmos DB

- First go to your development resource group

- Click on "Add" at the top

- Then "Marketplace"

- Search for "Azure Cosmos DB"

- Then "Create"

- Account Name: tds-training-<LETTER_OF_THE GROUP>-<ID_TEAM>

- API: Core (SQL)

- Location: (US) East US

- Capacity mode: Provisioned throughput

- Click on "Next: Global Distribution"

- Click on "Next: Networking"

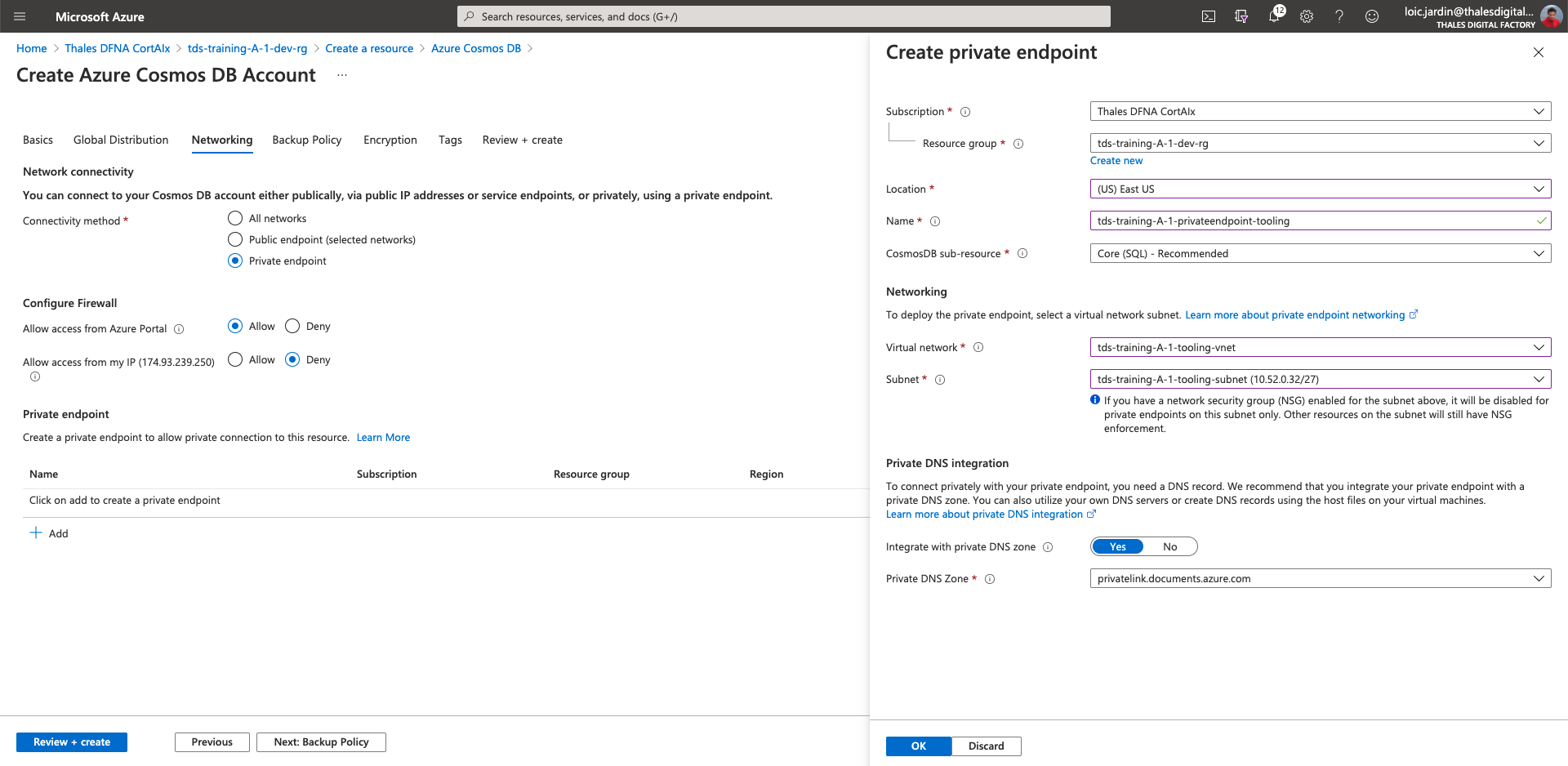

- Connectivity method: Private Endpoint

- Allow access from Azure Portal: Allow

- Allow access from my IP: Deny

- In the Private endpoint section, click on Add

Then fill in the fields with the following information:

- Location: (US) East US

- Name: tds-training-<LETTER_OF_THE GROUP>-<ID_TEAM>-privateendpoint-tooling

- Cosmos DB sub-resource: Core (SQL) - Recommended

- Virtual network: tds-training-<LETTER_OF_THE GROUP>-<ID_TEAM>-vne

- Subnet: tds-training-<LETTER_OF_THE GROUP>-<ID_TEAM>-tooling-subnet

- Click on "Review + create"

- Then "Create"

Access your Cosmos DB from the rebound-vm

- Access your rebound-vm using the bastion.

- Then execute the following commands:

# clone the getting started project

$ git clone https://gitlab.thalesdigital.io/platform-team-canada/k8saas-innersource/azure-cosmos-db-sql-api-nodejs-getting-started.git

Cloning into 'azure-cosmos-db-sql-api-nodejs-getting-started'...

Username for 'https://gitlab.thalesdigital.io': loic.jardin@thalesdigital.io

Password for 'https://loic.jardin@thalesdigital.io@gitlab.thalesdigital.io':

remote: Enumerating objects: 109, done.

remote: Counting objects: 100% (109/109), done.

remote: Compressing objects: 100% (58/58), done.

remote: Total 109 (delta 45), reused 109 (delta 45), pack-reused 0

Receiving objects: 100% (109/109), 36.50 KiB | 491.00 KiB/s, done.

Resolving deltas: 100% (45/45), done.

$ cd azure-cosmos-db-sql-api-nodejs-getting-started

$ vim config.js

You should see:

// @ts-check

const config = {

endpoint: "<Your Azure Cosmos account URI>",

key: "<Your Azure Cosmos account key>",

databaseId: "Tasks",

containerId: "Items",

partitionKey: { kind: "Hash", paths: ["/category"] }

};

module.exports = config;

In another tab or window, go to your Cosmos DB account, then:

- Go to the "Keys" section

- The endpoint (in the config.js file above) is the "URI" field: https://tds-training-<LETTER_OF_THE GROUP>-<ID_TEAM>-cosmodb.documents.azure.com:443/

- The key (in the config.js file above) can be either the PRIMARY KEY or the SECONDARY KEY

Update your config.js file with these values.
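Before running the sample, it can help to confirm that no placeholder was left in the file. The following is a small sketch, assuming the same object shape as the config.js shown earlier; the function `findPlaceholders` is hypothetical and not part of the getting-started project.

```javascript
// Hypothetical check: lists the config fields that still hold a "<...>" placeholder.
function findPlaceholders(config) {
  return Object.entries(config)
    .filter(([, value]) => typeof value === "string" && /^<.*>$/.test(value.trim()))
    .map(([key]) => key);
}

// Same shape as the config.js shown earlier, before it is edited.
const sampleConfig = {
  endpoint: "<Your Azure Cosmos account URI>",
  key: "<Your Azure Cosmos account key>",
  databaseId: "Tasks",
  containerId: "Items",
  partitionKey: { kind: "Hash", paths: ["/category"] },
};

const missing = findPlaceholders(sampleConfig);
if (missing.length > 0) {
  console.log(`Still to fill in: ${missing.join(", ")}`);
}
```

Only string values are inspected, so nested settings such as `partitionKey` are ignored by this check.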

Once done:

# install npm

$ sudo apt install npm

[...]

# install dependencies

$ npm install

├─┬ @azure/cosmos@3.5.2

│ ├── @types/debug@4.1.5

│ ├─┬ debug@4.3.1

│ │ └── ms@2.1.2

│ ├── fast-json-stable-stringify@2.1.0

│ ├── node-abort-controller@1.2.1

│ ├── node-fetch@2.6.1

│ ├─┬ os-name@3.1.0

│ │ ├── macos-release@2.4.1

│ │ └─┬ windows-release@3.3.3

│ │ └─┬ execa@1.0.0

│ │ ├─┬ cross-spawn@6.0.5

│ │ │ ├── nice-try@1.0.5

│ │ │ ├── path-key@2.0.1

│ │ │ ├── semver@5.7.1

│ │ │ ├─┬ shebang-command@1.2.0

│ │ │ │ └── shebang-regex@1.0.0

│ │ │ └── which@1.3.1

│ │ ├─┬ get-stream@4.1.0

│ │ │ └─┬ pump@3.0.0

│ │ │ ├── end-of-stream@1.4.4

│ │ │ └─┬ once@1.4.0

│ │ │ └── wrappy@1.0.2

│ │ ├── is-stream@1.1.0

│ │ ├── npm-run-path@2.0.2

│ │ ├── p-finally@1.0.0

│ │ ├── signal-exit@3.0.3

│ │ └── strip-eof@1.0.0

│ ├── priorityqueuejs@1.0.0

│ ├── semaphore@1.1.0

│ ├── tslib@1.14.1

│ └── uuid@3.4.0

└─┬ cross-env@7.0.3

└─┬ cross-spawn@7.0.3

├── path-key@3.1.1

├─┬ shebang-command@2.0.0

│ └── shebang-regex@3.0.0

└─┬ which@2.0.2

└── isexe@2.0.0

npm WARN azure-cosmosdb-sql-api-nodejs-getting-started@0.0.0 No repository field.

npm WARN azure-cosmosdb-sql-api-nodejs-getting-started@0.0.0 No license field.

# execute code

$ node app.js

Created database:

Tasks

Created container:

Items

Querying container: Items

Created new item: 3 - Complete Cosmos DB Node.js Quickstart ⚡

Updated item: 3 - Complete Cosmos DB Node.js Quickstart ⚡

Updated isComplete to true

Deleted item with id: 3

Done: your script has access to the Cosmos DB database and has performed several operations on it.

[Optional] Try to access the database from your laptop and understand the error

Because of the security bubble, it is not possible to access the database from your laptop.

Let's try anyway.

You can follow the same steps as before.

When I run the command, I get:

loicjardin@TDS-Loics-MacBook-Pro azure-cosmos-db-sql-api-nodejs-getting-started % node app.js

(node:95595) UnhandledPromiseRejectionWarning: Error: Request originated from client IP 174.93.239.250 through public internet. This is blocked by your Cosmos DB account firewall settings. More info: https://aka.ms/cosmosdb-tsg-forbidden

ActivityId: 9f82c75c-3a58-47f2-9d52-e9aea780c2a1, Microsoft.Azure.Documents.Common/2.11.0

at /private/tmp/azure-cosmos-db-sql-api-nodejs-getting-started/node_modules/@azure/cosmos/dist/index.js:6962:39

at Generator.next (<anonymous>)

at fulfilled (/private/tmp/azure-cosmos-db-sql-api-nodejs-getting-started/node_modules/tslib/tslib.js:107:62)

at processTicksAndRejections (internal/process/task_queues.js:93:5)

(node:95595) UnhandledPromiseRejectionWarning: Unhandled promise rejection. This error originated either by throwing inside of an async function without a catch block, or by rejecting a promise which was not handled with .catch(). (rejection id: 1)

(node:95595) [DEP0018] DeprecationWarning: Unhandled promise rejections are deprecated. In the future, promise rejections that are not handled will terminate the Node.js process with a non-zero exit code.

Why?

Let's test the name resolution:

On my laptop:

loicjardin@TDS-Loics-MacBook-Pro azure-cosmos-db-sql-api-nodejs-getting-started % nslookup tds-training-a-1-cosmodb.documents.azure.com

Server: 192.168.2.1

Address: 192.168.2.1#53

Non-authoritative answer:

tds-training-a-1-cosmodb.documents.azure.com canonical name = tds-training-a-1-cosmodb.privatelink.documents.azure.com.

tds-training-a-1-cosmodb.privatelink.documents.azure.com canonical name = cdb-ms-prod-westus1-fd56.cloudapp.net.

Name: cdb-ms-prod-westus1-fd56.cloudapp.net

Address: 40.112.241.103

So I get a public IP, and access through the public internet is forbidden by default.

From the rebound-vm:

tds-admin@tds-training-A-1-rebound-vm:~/azure-cosmos-db-sql-api-nodejs-getting-started$ nslookup tds-training-a-1-cosmodb.documents.azure.com

Server: 127.0.0.53

Address: 127.0.0.53#53

Non-authoritative answer:

tds-training-a-1-cosmodb.documents.azure.com canonical name = tds-training-a-1-cosmodb.privatelink.documents.azure.com.

Name: tds-training-a-1-cosmodb.privatelink.documents.azure.com

Address: 10.52.0.37

Now I get a private IP, thanks to the private endpoint and its private DNS zone.
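The difference between the two lookups can be expressed as a simple test on the resolved address. The sketch below classifies an IPv4 address as private when it falls in one of the RFC 1918 ranges; the function name `isPrivateIPv4` is hypothetical, and the two sample addresses are the ones returned by nslookup above.

```javascript
// Returns true when the IPv4 address belongs to one of the RFC 1918 private ranges:
// 10.0.0.0/8, 172.16.0.0/12 or 192.168.0.0/16.
function isPrivateIPv4(ip) {
  const octets = ip.split(".").map(Number);
  if (octets.length !== 4 || octets.some((o) => !Number.isInteger(o) || o < 0 || o > 255)) {
    throw new Error(`Invalid IPv4 address: ${ip}`);
  }
  const [a, b] = octets;
  return a === 10 || (a === 172 && b >= 16 && b <= 31) || (a === 192 && b === 168);
}

// The two addresses returned by nslookup in this section.
console.log(isPrivateIPv4("40.112.241.103")); // false: resolved from the laptop
console.log(isPrivateIPv4("10.52.0.37")); // true: resolved from the rebound-vm
```

In a real script the address could be obtained at run time with Node's `dns.promises.lookup` instead of being hard-coded.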

Step 2

We are going to create the following resources:

- a private endpoint for k8saas (to be requested)

- a new Node.js container that uses Cosmos DB

- a pull secret in Kubernetes to pull the image

Ask for a private endpoint for k8saas

Request a private endpoint from your Cosmos DB to the tds-training-sandbox k8saas cluster.

What to do?

- Go to the TrustNest K8SaaS service catalog

- Ask for peering

- Specify the name of the k8saas cluster you want to peer with

- Specify the subscription, resource_group, and Cosmos DB name to be interconnected

Only HTTPS is allowed by default.

Once the peering is done, you can remove the Network Contributor role on the Cosmos DB.

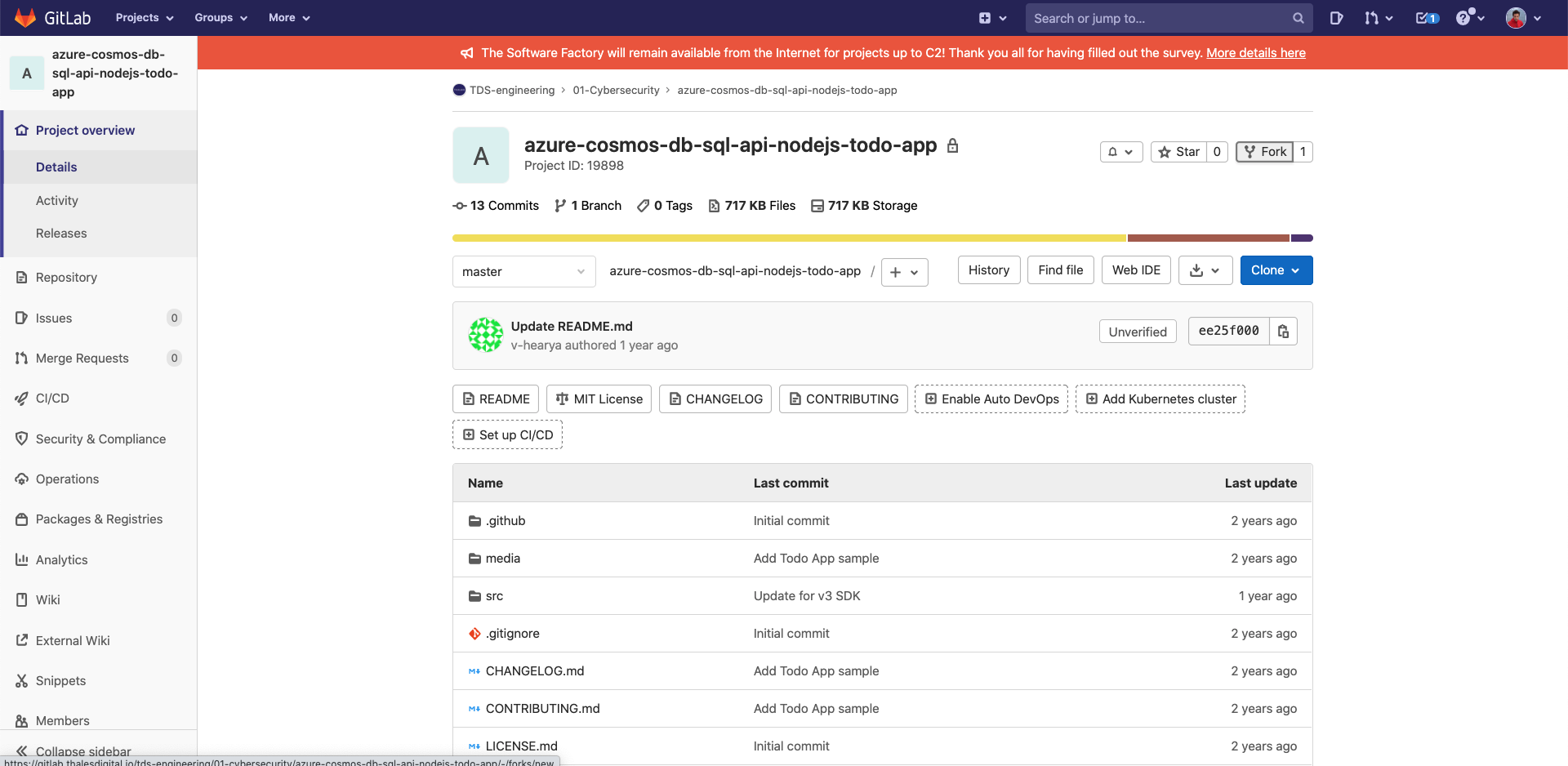

Dockerize your application

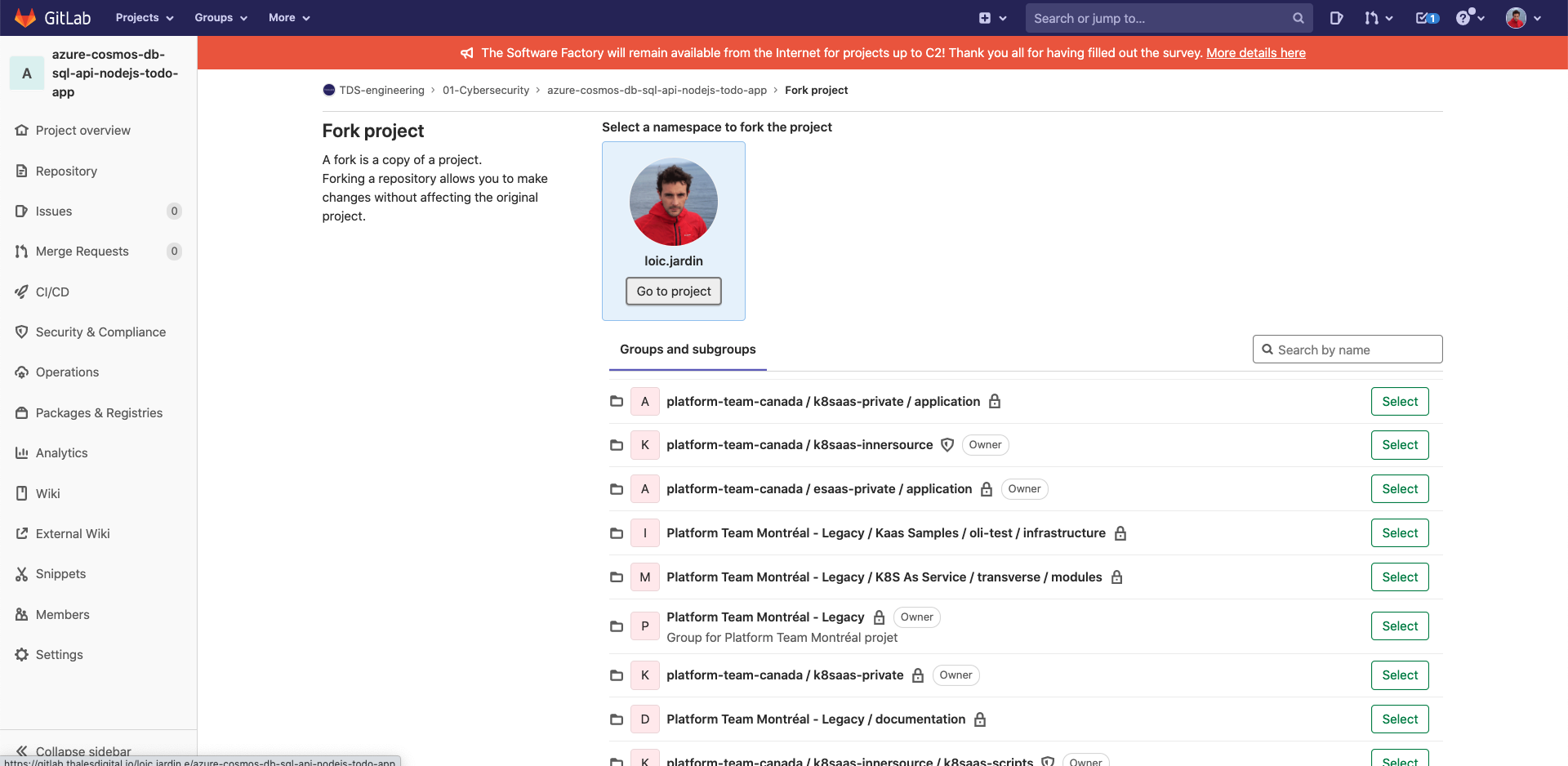

Fork the default project into your personal GitLab space

- Open the azure-cosmos-db-sql-api-nodejs-todo-app project

- Click on "Fork" at the top right

- Then "go to project"

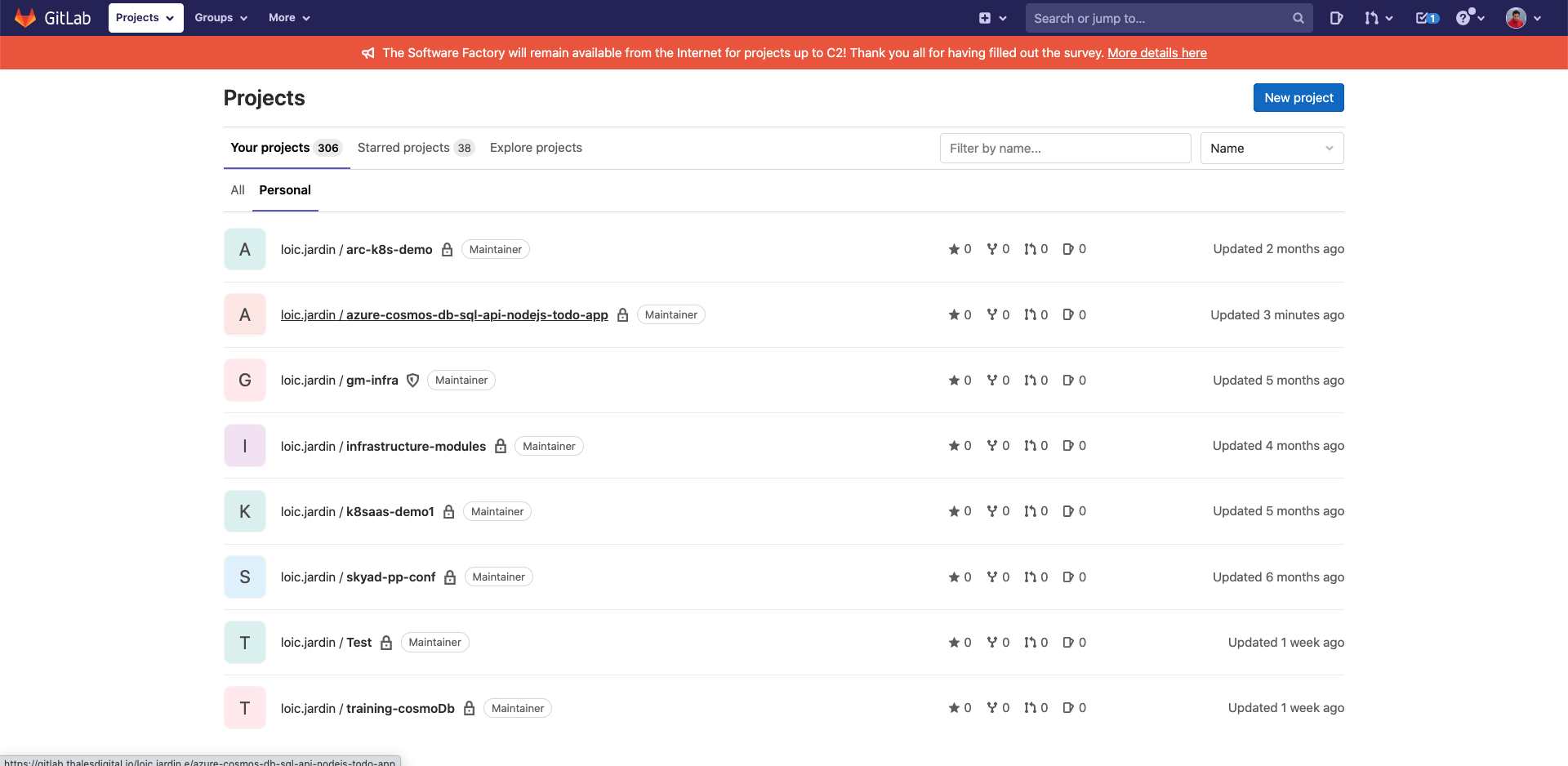

Now you should have a copy of the project in your personal space

Create the Docker image

- From your laptop or the rebound-vm, clone the project (git clone YOUR_NEW_LINK)

- On GitLab, in your project, click on "Package & registries" then "Container registry"

Prerequisites:

Then connect your local device to the GitLab registry:

$ docker login registry.thalesdigital.io

Authenticating with existing credentials...

Login Succeeded

# build your image

$ docker build -t registry.thalesdigital.io/loic.jardin.e/azure-cosmos-db-sql-api-nodejs-todo-app .

[+] Building 56.8s (10/10) FINISHED

=> [internal] load build definition from Dockerfile 0.0s

=> => transferring dockerfile: 425B 0.0s

=> [internal] load .dockerignore 0.0s

=> => transferring context: 67B 0.0s

=> [internal] load metadata for docker.io/library/node:14 7.0s

=> [1/5] FROM docker.io/library/node:14@sha256:8eb45f4677c813ad08cef8522254640aa6a1800e75a9c213a0a651f6f3564189 45.1s

=> => resolve docker.io/library/node:14@sha256:8eb45f4677c813ad08cef8522254640aa6a1800e75a9c213a0a651f6f3564189 0.0s

=> => sha256:b53ce1fd2746e8d2037f1b0b91ddea0cc7411eb3e5949fe10c0320aca8f7392b 4.34MB / 4.34MB 5.9s

=> => sha256:8eb45f4677c813ad08cef8522254640aa6a1800e75a9c213a0a651f6f3564189 776B / 776B 0.0s

=> => sha256:d6602e31594f03e9bb465daf54070c8a277e2a13f1460fe2e19de51f1bf59ce2 7.83kB / 7.83kB 0.0s

=> => sha256:76b8ef87096fa726adbe8f073ef69bb5664bac19474c5cce4dd69e08a234903b 45.38MB / 45.38MB 4.0s

=> => sha256:2e2bafe8a0f40509cc10249087268e66a662e437f10e9598a09abb5687038a57 11.29MB / 11.29MB 3.3s

=> => sha256:874d39231651fb4c98b3234515f2f60b151e609fd0e08d25b72cc799a0cc8b35 2.21kB / 2.21kB 0.0s

=> => sha256:84a8c1bd5887cc4a89e1f286fed9ee31ce12dba9f6813cf14082ada3e9ab6f63 49.79MB / 49.79MB 17.8s

=> => sha256:7a803dc0b40fcd10faee3fb3ebb2d7aaa88500520e6295295f5163c4bb48548b 214.35MB / 214.35MB 34.0s

=> => extracting sha256:76b8ef87096fa726adbe8f073ef69bb5664bac19474c5cce4dd69e08a234903b 2.1s

=> => sha256:b800e94e7303e276b8fb4911a40bfe28f46180d997022c69bf1ee02fb7b86721 4.19kB / 4.19kB 7.2s

=> => extracting sha256:2e2bafe8a0f40509cc10249087268e66a662e437f10e9598a09abb5687038a57 0.4s

=> => extracting sha256:b53ce1fd2746e8d2037f1b0b91ddea0cc7411eb3e5949fe10c0320aca8f7392b 0.2s

=> => sha256:8e9f42962912ef3336835eb1b4783e43d07e5f5b8e805f9c1aa1dfafdfedc256 34.59MB / 34.59MB 20.7s

=> => sha256:cc1c1f0d8c868c02d7b63a44b4b3653aed9f818614dbb830005e524b284cd6f9 2.38MB / 2.38MB 18.2s

=> => extracting sha256:84a8c1bd5887cc4a89e1f286fed9ee31ce12dba9f6813cf14082ada3e9ab6f63 2.6s

=> => sha256:a42c31ab44dd27f101c2b2f80be44a52363a7c9d41690746a2c88913b9bb2668 294B / 294B 18.4s

=> => extracting sha256:7a803dc0b40fcd10faee3fb3ebb2d7aaa88500520e6295295f5163c4bb48548b 8.5s

=> => extracting sha256:b800e94e7303e276b8fb4911a40bfe28f46180d997022c69bf1ee02fb7b86721 0.1s

=> => extracting sha256:8e9f42962912ef3336835eb1b4783e43d07e5f5b8e805f9c1aa1dfafdfedc256 1.7s

=> => extracting sha256:cc1c1f0d8c868c02d7b63a44b4b3653aed9f818614dbb830005e524b284cd6f9 0.2s

=> => extracting sha256:a42c31ab44dd27f101c2b2f80be44a52363a7c9d41690746a2c88913b9bb2668 0.0s

=> [internal] load build context 0.1s

=> => transferring context: 252.44kB 0.1s

=> [2/5] WORKDIR /usr/src/app 0.2s

=> [3/5] COPY package*.json ./ 0.0s

=> [4/5] RUN npm install 4.0s

=> [5/5] COPY . . 0.0s

=> exporting to image 0.3s

=> => exporting layers 0.3s

=> => writing image sha256:fce100c9ace07398542a68409a844f9c5b70a85b794a7ad8108b8d21a107acf4 0.0s

=> => naming to registry.thalesdigital.io/loic.jardin.e/azure-cosmos-db-sql-api-nodejs-todo-app 0.0s

Use 'docker scan' to run Snyk tests against images to find vulnerabilities and learn how to fix them

# check the image

$ docker images

REPOSITORY TAG IMAGE ID CREATED SIZE

registry.thalesdigital.io/loic.jardin.e/azure-cosmos-db-sql-api-nodejs-todo-app latest fce100c9ace0 About a minute ago 957MB

# push the image to gitlab

$ docker push registry.thalesdigital.io/loic.jardin.e/azure-cosmos-db-sql-api-nodejs-todo-app

Using default tag: latest

The push refers to repository [registry.thalesdigital.io/loic.jardin.e/azure-cosmos-db-sql-api-nodejs-todo-app]

df37407007f8: Pushed

3ed96b637e72: Pushed

ba01c049f3ae: Pushed

910123b231de: Pushed

d5cad505e02a: Pushed

da4ae655ef00: Pushed

1a967be4a8fd: Pushed

33dd93485756: Pushed

607d71c12b77: Pushed

052174538f53: Pushed

8abfe7e7c816: Pushed

c8b886062a47: Pushed

16fc2e3ca032: Pushed

latest: digest: sha256:34c28924a755276361c7c074466931783349691be1c8014c543926fb3d80c0b1 size: 3051
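The push output notes that the default tag `latest` was used. As a hedged illustration of how a full image reference is composed, and of how an explicit tag could be added, here is a small sketch; the helper `imageReference` and the tag value are hypothetical.

```javascript
// Hypothetical helper: builds "<registry>/<namespace>/<project>:<tag>".
// The tag defaults to "latest", which is also what docker assumes when none is given.
function imageReference({ registry, namespace, project, tag = "latest" }) {
  for (const [name, value] of Object.entries({ registry, namespace, project, tag })) {
    if (typeof value !== "string" || value === "") {
      throw new Error(`Missing image reference part: ${name}`);
    }
  }
  return `${registry}/${namespace}/${project}:${tag}`;
}

console.log(
  imageReference({
    registry: "registry.thalesdigital.io",
    namespace: "<YOUR_GITLAB_ID>",
    project: "azure-cosmos-db-sql-api-nodejs-todo-app",
    tag: "1.0.0", // made-up version, for illustration only
  })
);
```

An explicit tag makes it easier to tell which build a cluster is running, although this tutorial works with `latest` throughout.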

Allow Kubernetes to download the Docker image

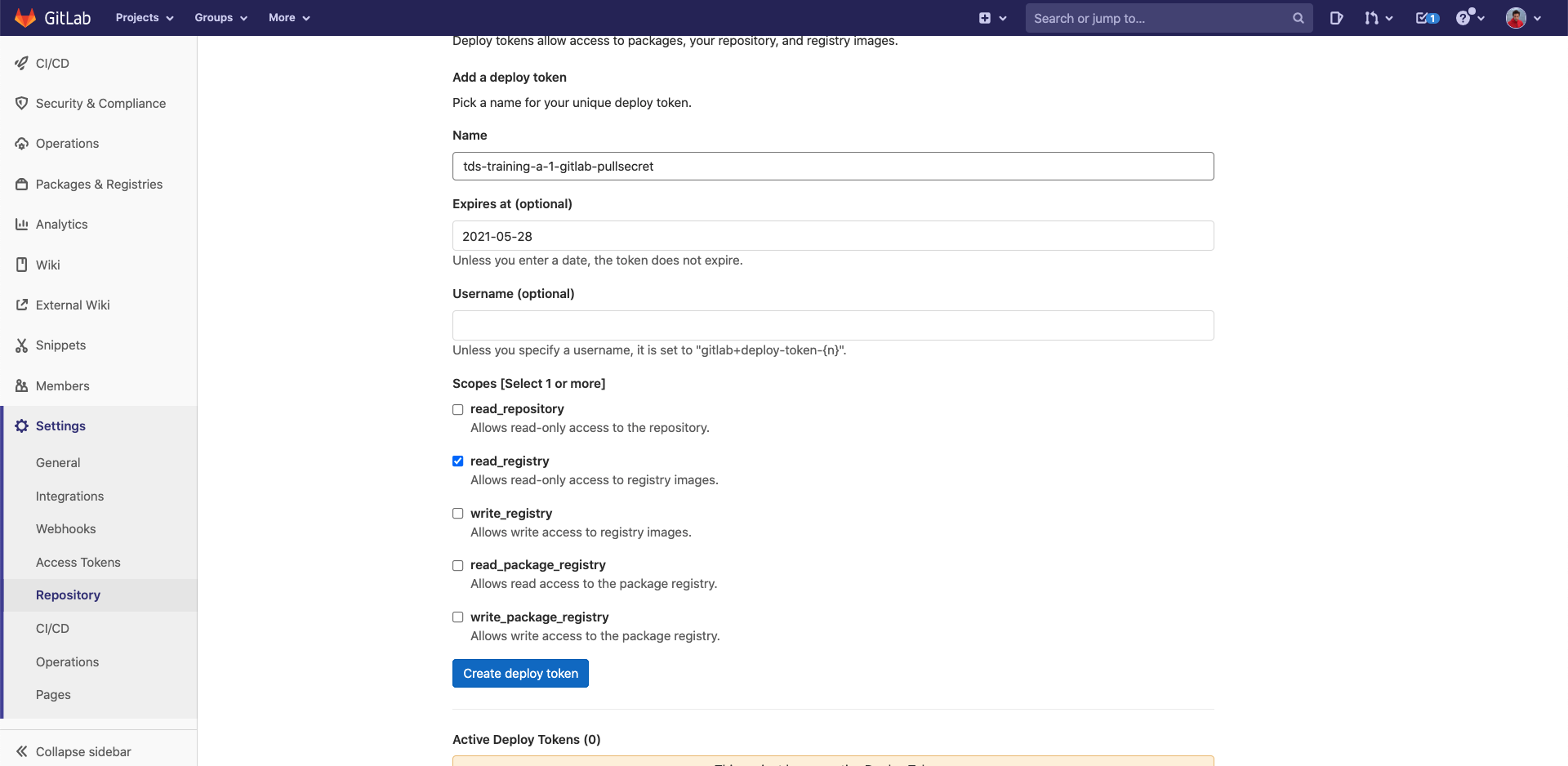

In your GitLab project:

- Click on "Settings", then "Repository", then "Deploy tokens"

Fill in the fields with the following information:

- Name: tds-training-<LETTER_OF_THE GROUP>-<ID_TEAM>-gitlab-pullsecret

- Expires at: specify a date

- Tick "read_registry"

- Click on "Create deploy token"

Now copy the username and the token.

We are going to create a secret in Kubernetes that contains the GitLab token.

Source: the official Kubernetes documentation

$ kubectl create secret docker-registry tds-training-<LETTER_OF_THE GROUP>-<ID_TEAM>-gitlab-pullsecret --docker-server=registry.thalesdigital.io --docker-username=TOKEN_USERNAME --docker-password=TOKEN_VALUE

secret/tds-training-a-1-gitlab-pullsecret created

Kubernetes will use this token to pull your image in the next steps.
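To make the content of this secret more concrete, the sketch below rebuilds the JSON document that a docker-registry secret holds, assuming the layout documented by Kubernetes for secrets of type `kubernetes.io/dockerconfigjson`. The function name `dockerConfigJson` is hypothetical, and the credentials are the same placeholders as in the kubectl command.

```javascript
// Builds the JSON document that a docker-registry secret stores under the
// ".dockerconfigjson" key (secret type kubernetes.io/dockerconfigjson).
function dockerConfigJson(server, username, password) {
  // "auth" is the base64 encoding of "username:password".
  const auth = Buffer.from(`${username}:${password}`).toString("base64");
  return JSON.stringify({ auths: { [server]: { username, password, auth } } });
}

// Placeholder credentials, as in the kubectl command above.
console.log(dockerConfigJson("registry.thalesdigital.io", "TOKEN_USERNAME", "TOKEN_VALUE"));
```

Base64 is an encoding, not encryption: anyone who can read the secret can recover the deploy token, which is one reason to limit the token to the "read_registry" scope.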

Deploy the application to Kubernetes

First, clone the helper project:

$ git clone https://gitlab.thalesdigital.io/platform-team-canada/k8saas-innersource/session-5-step4-kubernetes-files.git

You should have a file: azure-cosmos-db-sql-api-nodejs-todo-app-kubernetes.yml

apiVersion: apps/v1

kind: Deployment

metadata:

  name: azure-cosmos-db-sql-api-nodejs-todo-app

spec:

  replicas: 1

  selector:

    matchLabels:

      app: azure-cosmos-db-sql-api-nodejs-todo-app

  template:

    metadata:

      labels:

        app: azure-cosmos-db-sql-api-nodejs-todo-app

    spec:

      imagePullSecrets:

        - name: <YOUR_GITLAB_PULL_SECRET>

      containers:

        - name: azure-cosmos-db-sql-api-nodejs-todo-app

          image: <YOUR_IMAGE>

          ports:

            - containerPort: 3000

          env:

            - name: HOST

              value: "<YOUR_COSMODB_URI>"

            - name: AUTH_KEY

              value: "<YOUR_COSMODB_PRIMARY_KEY>"

---

apiVersion: v1

kind: Service

metadata:

  name: azure-cosmos-db-sql-api-nodejs-todo-app

spec:

  type: ClusterIP

  ports:

    - port: 3000

  selector:

    app: azure-cosmos-db-sql-api-nodejs-todo-app

Then update this file with:

- <YOUR_GITLAB_PULL_SECRET>: tds-training-<LETTER_OF_THE GROUP>-<ID_TEAM>-gitlab-pullsecret

- <YOUR_COSMODB_URI>

- <YOUR_COSMODB_PRIMARY_KEY>

- <YOUR_IMAGE>: should look like registry.thalesdigital.io/<YOUR_GITLAB_ID>/azure-cosmos-db-sql-api-nodejs-todo-app
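The substitution can be done by hand in an editor. For those who prefer to script it, here is a minimal sketch, assuming the placeholders appear literally as `<YOUR_...>` in the file; the helper `fillManifest` and all sample values are hypothetical.

```javascript
// Hypothetical helper: replaces every "<KEY>" placeholder listed in `values`
// and fails if a "<YOUR_...>" placeholder is left over afterwards.
function fillManifest(template, values) {
  let result = template;
  for (const [placeholder, value] of Object.entries(values)) {
    // split/join replaces all occurrences without regex special-character issues.
    result = result.split(`<${placeholder}>`).join(value);
  }
  const leftover = result.match(/<YOUR_[A-Z_]+>/g);
  if (leftover) {
    throw new Error(`Unfilled placeholders: ${[...new Set(leftover)].join(", ")}`);
  }
  return result;
}

// Shortened, made-up excerpt of the manifest; all values are illustrative.
const excerpt = ["image: <YOUR_IMAGE>", 'value: "<YOUR_COSMODB_URI>"'].join("\n");

console.log(
  fillManifest(excerpt, {
    YOUR_IMAGE: "registry.example/me/azure-cosmos-db-sql-api-nodejs-todo-app",
    YOUR_COSMODB_URI: "https://example.documents.azure.com:443/",
  })
);
```

Because the filled-in file contains the Cosmos DB primary key, it is probably best kept out of version control.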

Once updated:

$ kubectl apply -f azure-cosmos-db-sql-api-nodejs-todo-app-kubernetes.yml

deployment.apps/azure-cosmos-db-sql-api-nodejs-todo-app created

service/azure-cosmos-db-sql-api-nodejs-todo-app created

# then test with a port forward

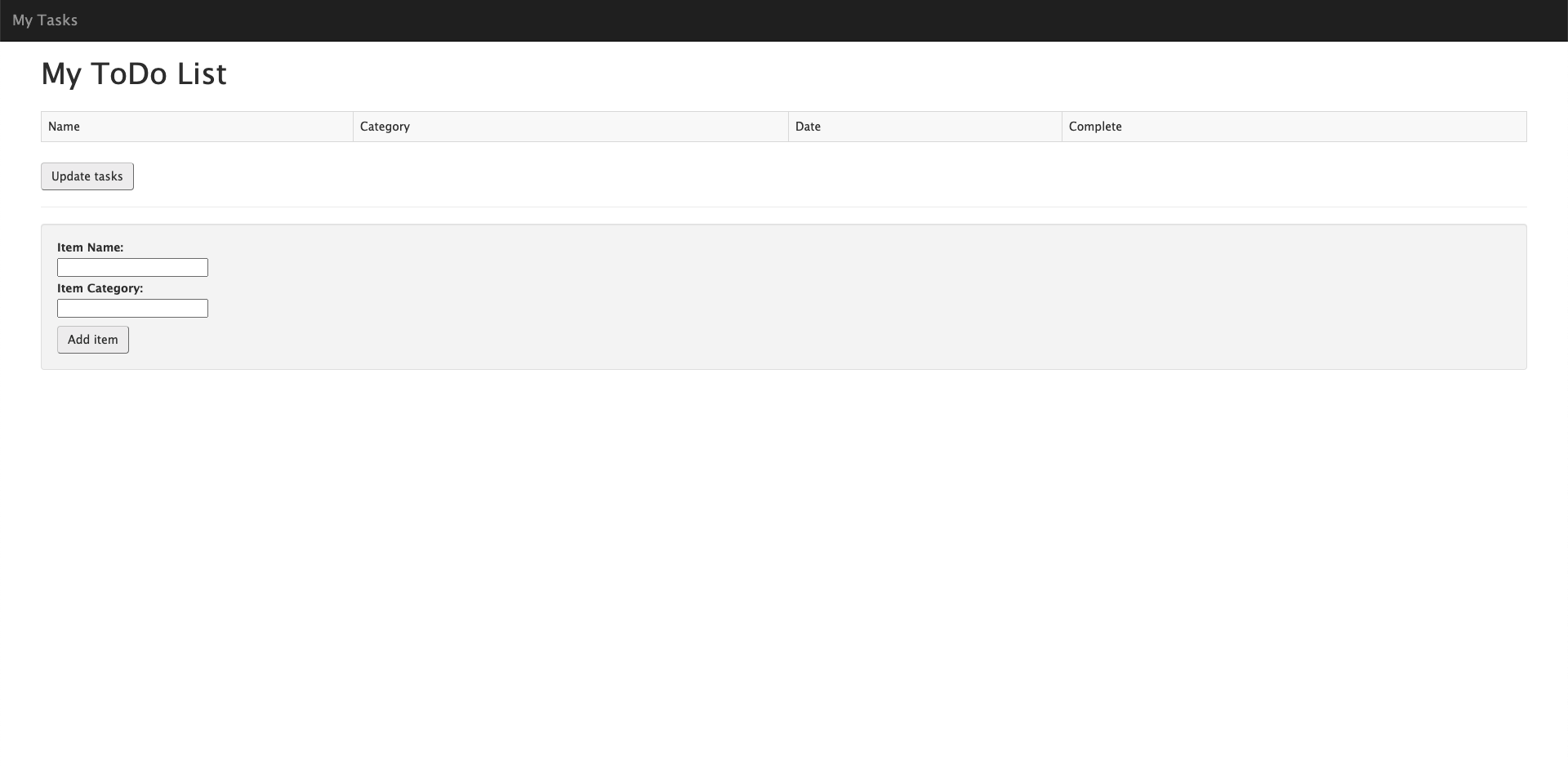

$ kubectl port-forward pods/<YOUR_POD> 3000:3000

Open localhost:3000 with your favorite browser

You see:

Next Step

- Secure your application using Pomerium